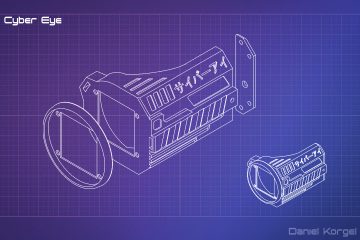

An animated Cyberpunk eyepiece, which looks around and blinks.

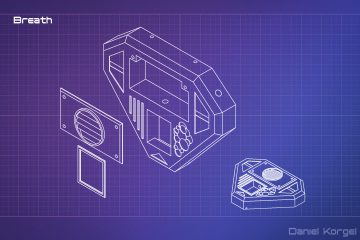

The D-KOR-Tec Breath is an external lung implant that uses a highly efficient gene-modified plant to produce oxygen through natural photosynthesis. The plant is contained in a small, glass-protected chamber. The oxygen is guided directly into the user’s body, which allows the user to breathe fresh air even in the dirtiest parts of the city***.

*Due to mechanical limitations, the replaced eye cannot be used for looking at objects in very close proximity. This includes aiming rifles and small-caliber weapons that do not come with a compatible D-KOR aim adapter.

**Bandwidth limitations of the skin-to-neuro interface only allow a perceived resolution of 2048 × 2048 at 30 FPS LDR. Full potential can be unlocked with the D-KOR optic nerve adapter (requires insertion by a ripperdoc).

Features and Build

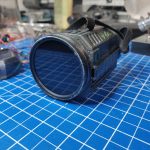

I started working on this project as part of this year’s Halloween costume. The party had to be cancelled due to COVID-19 and Cyberpunk 2077 got delayed too, so I had some more time to work on it. Here’s the (current) result.

The set consists of two parts: the eyepiece and the control unit, which is designed as the artificial lung. The eyepiece holds a 1.5″ OLED display, which is hidden behind a 60 mm neutral density (ND) camera filter. It also houses eight 3 mm LEDs connected to an MCP23017 GPIO expander, which is controlled over I²C. This allowed me to control every LED independently without too many wires.

Speaking of wires: the OLED uses SPI and the LEDs use I²C, so a minimum of 9 wires connect the eyepiece to the control unit.

A light sensor in the eyepiece measures the ambient brightness, and the pupil is scaled accordingly (as of now, the sensor requires additional wires to reach an analog pin on the Pi). I’m also working on a gyro/accelerometer implementation, which will allow the eye to focus on “real points” and behave like a real eye when the user moves their head.
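For anyone who wants to try the LED part, here is a minimal C++ sketch of driving eight LEDs on an MCP23017 from a Raspberry Pi through the Linux `i2c-dev` interface. It is not taken from the project’s repository. It assumes the LEDs sit on port A (GPA0 to GPA7), the expander uses the default address 0x20 (A0 to A2 tied to GND) with the default IOCON.BANK = 0 register layout, and the bus is `/dev/i2c-1`. The names `setLed`, `ledFrame` and `writeLeds` are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <fcntl.h>          // open
#include <linux/i2c-dev.h>  // I2C_SLAVE
#include <sys/ioctl.h>      // ioctl
#include <unistd.h>         // write, close, ssize_t

// MCP23017 registers in the default IOCON.BANK = 0 layout.
constexpr std::uint8_t kIodirA = 0x00;  // port A direction (1 = input, 0 = output)
constexpr std::uint8_t kOlatA  = 0x14;  // port A output latch
// Assumption: address pins A0..A2 tied to GND.
constexpr std::uint8_t kExpanderAddress = 0x20;

// Turn LED `index` (0..7, i.e. GPA0..GPA7) on or off in an 8-bit pattern.
std::uint8_t setLed(std::uint8_t mask, int index, bool on) {
    assert(index >= 0 && index < 8);
    const unsigned bit = 1u << index;
    return static_cast<std::uint8_t>(on ? (mask | bit) : (mask & ~bit));
}

// Two-byte I2C message "register, value" that updates all eight LEDs at once.
std::array<std::uint8_t, 2> ledFrame(std::uint8_t mask) {
    return {kOlatA, mask};
}

// Configure port A as outputs and write one LED pattern.
// Returns false if opening the bus, selecting the device or writing fails.
bool writeLeds(const char* bus, std::uint8_t mask) {
    const int fd = open(bus, O_RDWR);
    if (fd < 0) return false;
    bool ok = ioctl(fd, I2C_SLAVE, kExpanderAddress) >= 0;
    const std::uint8_t direction[2] = {kIodirA, 0x00};  // all port-A pins as outputs
    ok = ok && write(fd, direction, sizeof direction) == static_cast<ssize_t>(sizeof direction);
    const auto frame = ledFrame(mask);
    ok = ok && write(fd, frame.data(), frame.size()) == static_cast<ssize_t>(frame.size());
    close(fd);
    return ok;
}
```

For example, `writeLeds("/dev/i2c-1", setLed(0, 3, true))` would light only the LED on GPA3. Because the whole port is written in a single transaction, a blink or chase animation needs just one small I²C write per frame.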

In the long term, I’m also thinking of controlling it with gestures or with a camera to track things. Unfortunately, the device is too small to hide a camera in, and I don’t want it to be much bigger… (Maybe in the control unit?)
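The light-sensor and gyro features described above essentially boil down to two small mappings. The following C++ sketch is only an illustration, not the project’s code. It assumes a raw light reading between 0 (dark) and a maximum such as 1023 for a 10-bit ADC, a pupil radius range in pixels, and a head yaw angle in degrees already obtained from the gyro. The function names and the ±30° eye range are hypothetical.

```cpp
#include <algorithm>
#include <cassert>

// Map a raw light reading to a pupil radius in pixels:
// darkness -> widest pupil, full brightness -> narrowest pupil.
float pupilRadius(int reading, int maxReading, float minRadius, float maxRadius) {
    assert(maxReading > 0);
    const float t = std::min(std::max(static_cast<float>(reading) / maxReading, 0.0f), 1.0f);
    return maxRadius - t * (maxRadius - minRadius);
}

// Move a value a fraction `alpha` (0..1) toward its target each frame,
// so the pupil eases into its new size instead of jumping.
float easeToward(float current, float target, float alpha) {
    return current + alpha * (target - current);
}

// Keep the eye fixed on a point in the room: when the head turns by headYaw,
// the eye turns the other way, limited to the range the display can show.
float compensatedGaze(float targetYaw, float headYaw, float maxEyeYaw = 30.0f) {
    return std::min(std::max(targetYaw - headYaw, -maxEyeYaw), maxEyeYaw);
}
```

Calling `easeToward(current, pupilRadius(...), 0.1f)` once per frame is one simple way to get the gradual pupil reaction of a real eye.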

The control unit houses a Raspberry Pi Zero W and two RGB LEDs, which play a simple breathing animation. Power-wise, the Pi (and thus the whole setup) is connected to a 25800 mAh power bank that I keep in my pocket. By my calculations, this should be more than enough to keep the setup running for an evening.
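A breathing animation like the one on the control unit’s RGB LEDs can be produced with a smooth periodic brightness curve. Below is a minimal C++ sketch of one common approach, a raised cosine. The 4-second period, the 8-bit (0 to 255) brightness scale and the function name are assumptions, and how the value reaches the LEDs (for example via PWM on the Pi’s pins) is left out.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Brightness (0..255) of a "breathing" LED at time tSeconds.
// (1 - cos(2*pi*t/T)) / 2 rises smoothly from 0 to 1 and back once per period T,
// so the LED fades in and out without visible steps at the turning points.
std::uint8_t breathingLevel(double tSeconds, double periodSeconds = 4.0) {
    assert(periodSeconds > 0.0);
    const double kPi = 3.14159265358979323846;
    const double phase = 2.0 * kPi * tSeconds / periodSeconds;
    const double level = (1.0 - std::cos(phase)) / 2.0;  // 0.0 .. 1.0
    return static_cast<std::uint8_t>(std::lround(level * 255.0));
}
```

Evaluating `breathingLevel(t)` every few milliseconds and writing the result to each color channel gives a slow fade in and out; scaling the three channels differently tints the glow.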

The eyepiece and control unit parts were 3D printed in black PLA on my Anycubic i3 Mega, then painted and weathered with acrylic paints (mainly black and anthracite).

The blue light was achieved using a cheap neon wire from eBay.

Initially, I embedded an Arduino Pro Micro in the eyepiece so that I would not need the control unit, but I wasn’t satisfied with the frame rate it could achieve on the OLED. So I switched to a Raspberry Pi Zero W that I still had lying around.
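A rough upper bound shows why the display link matters: every frame has to be pushed over SPI, so the frame rate can never exceed the SPI clock divided by the bits per frame. The sketch below assumes a 128 × 128 pixel panel with 16-bit color (the format of Waveshare’s 1.5″ RGB OLED; the exact module variant is an assumption here) and ignores command overhead and rendering time, so real numbers are lower. The clock values are example inputs, not measurements from this build.

```cpp
#include <cassert>

// Upper bound on frames per second when every pixel of every frame is sent
// over SPI: clock rate (bits/s) divided by bits per frame. Command bytes,
// pauses between transfers and drawing time are ignored.
double maxFramesPerSecond(int width, int height, int bitsPerPixel, double spiClockHz) {
    assert(width > 0 && height > 0 && bitsPerPixel > 0);
    const double bitsPerFrame = static_cast<double>(width) * height * bitsPerPixel;
    return spiClockHz / bitsPerFrame;
}
```

On a 16 MHz Pro Micro, the ATmega32U4’s hardware SPI tops out at half the CPU clock (8 MHz), so `maxFramesPerSecond(128, 128, 16, 8e6)` gives about 30.5 frames per second before any drawing work is done. The bound scales linearly with the clock, which is where a faster SPI bus such as the Pi’s can help.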

The source code for the Arduino and Raspberry Pi programs can be found on my GitHub. Both use my ‘Natural-Eye-Behavior-Simulator’ project, which I already used for my (Portal Core themed) Eye Animatronic. (Note: The sensor reading is not in the public repository yet, as it’s not as polished as I want it to be.)
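The simulator itself lives in the linked repositories and is not described here. Purely as an illustration of the general idea behind “natural” idle eye behavior, the hypothetical C++ sketch below schedules blinks and gaze jumps at random intervals so the eye never falls into a mechanical rhythm. All names and timing ranges are assumptions, not values from the project.

```cpp
#include <cassert>
#include <random>
#include <utility>

// Hypothetical waiting-time range in seconds (min must be below max).
struct TimingRange {
    double minSeconds;
    double maxSeconds;
};

// Hypothetical idle-behavior scheduler: random waits between blinks and
// between gaze jumps, plus random gaze targets.
class IdleEyeScheduler {
public:
    explicit IdleEyeScheduler(unsigned seed,
                              TimingRange blink = {2.0, 6.0},  // assumed values
                              TimingRange gaze = {0.5, 3.0})   // assumed values
        : rng_(seed),
          blinkDelay_(blink.minSeconds, blink.maxSeconds),
          gazeDelay_(gaze.minSeconds, gaze.maxSeconds) {
        assert(blink.minSeconds < blink.maxSeconds);
        assert(gaze.minSeconds < gaze.maxSeconds);
    }

    // Seconds to wait before the next blink.
    double nextBlinkDelay() { return blinkDelay_(rng_); }

    // Seconds to wait before the eye jumps to a new point.
    double nextGazeDelay() { return gazeDelay_(rng_); }

    // New gaze target as a normalized (x, y) offset between -1 and 1,
    // to be scaled to the display by the caller.
    std::pair<double, double> nextGazeTarget() {
        return {gazeOffset_(rng_), gazeOffset_(rng_)};
    }

private:
    std::mt19937 rng_;
    std::uniform_real_distribution<double> blinkDelay_;
    std::uniform_real_distribution<double> gazeDelay_;
    std::uniform_real_distribution<double> gazeOffset_{-1.0, 1.0};
};
```

A render loop would count down both delays, trigger a blink or a gaze jump when one expires, and then draw a fresh delay from the scheduler.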

Links

Please be aware that everything is provided “as is”, without any warranty or detailed support. If you find a bug or have ideas for improvements, I’ll be happy to help.

- Raspberry Pi Program (C++): https://github.com/Dak0r/Cyborg-1.5-Oled-Eye-RaspberryPi

- Arduino Sketch: https://github.com/Dak0r/Cyborg-1.5-Oled-Eye-Arduino

- 3D Models: https://www.thingiverse.com/thing:4678910

- If you like my work, please consider a donation: https://paypal.me/dakor

Parts

For Amazon products, I used referral links in this parts list. If you buy anything through them, I get a small percentage, but you still pay the same price.

- Raspberry Pi Zero: https://amzn.to/2VY4ryB

- 1.5″ OLED by Waveshare: https://amzn.to/36WgvqB

- MCP23017 GPIO Expander: https://amzn.to/3gpj9rR

- 25800 mAh Power Bank: https://amzn.to/3n13hyo

- 58 mm ND Filter for a camera lens: https://amzn.to/4pX9D1T (snug fit)

- Neon Wire (eBay): https://tinyurl.com/y6pzrg5n

- 8x 3mm LEDs for Eye Piece

- 2x RGB LEDs for Control Unit

- Wires, Soldering Iron etc.
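As a rough sanity check on the power budget, a power bank’s runtime can be estimated from its capacity, its cell voltage, the conversion efficiency to 5 V, and the load current. The C++ sketch below does that arithmetic. The 3.7 V nominal cell voltage, 85 % efficiency and 300 mA load are assumed example values, not measurements of this build.

```cpp
#include <cassert>

// Estimated runtime in hours for a USB power bank.
// Capacity is usually rated at the internal cell voltage (about 3.7 V for
// lithium cells), while the load draws from the 5 V output, so the stored
// energy is scaled by the boost converter's efficiency.
double runtimeHours(double capacityMilliampHours, double cellVoltage,
                    double efficiency, double loadVoltage, double loadMilliamps) {
    assert(loadVoltage > 0.0 && loadMilliamps > 0.0);
    const double storedWattHours = capacityMilliampHours / 1000.0 * cellVoltage;
    const double usableWattHours = storedWattHours * efficiency;
    const double loadWatts = loadVoltage * loadMilliamps / 1000.0;
    return usableWattHours / loadWatts;
}
```

With these assumed numbers, `runtimeHours(25800, 3.7, 0.85, 5.0, 300)` comes out at roughly 54 hours, so even a load several times higher would still cover an evening comfortably.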
